Claude Managed Agents: What Actually Ships

Anthropic launched hosted agent infrastructure that handles sandboxing, orchestration, and crash recovery. Here is what ships today and where the lock-in risks live.

Anthropic's Managed Agents announcement pulled 2 million views in under two hours. One developer's response on X: "there goes a whole YC batch." That reaction says a lot about what this product is actually targeting: the startups whose entire pitch is agent infrastructure.

This is not a new model. It is hosted infrastructure that handles sandboxing, orchestration, state management, and crash recovery. What Anthropic is now selling as a service is the operational layer that typically adds three to six months between "my agent works in a demo" and "my agent runs reliably in production."

What Claude Managed Agents Actually Provides

Every team that gets past the prototype phase hits the same wall. Your agent works in a notebook. Then you need sandboxed execution environments, credential management, session persistence, error recovery, and observability. That is months of engineering before any user sees anything useful. Managed Agents is Anthropic's answer to that wall.

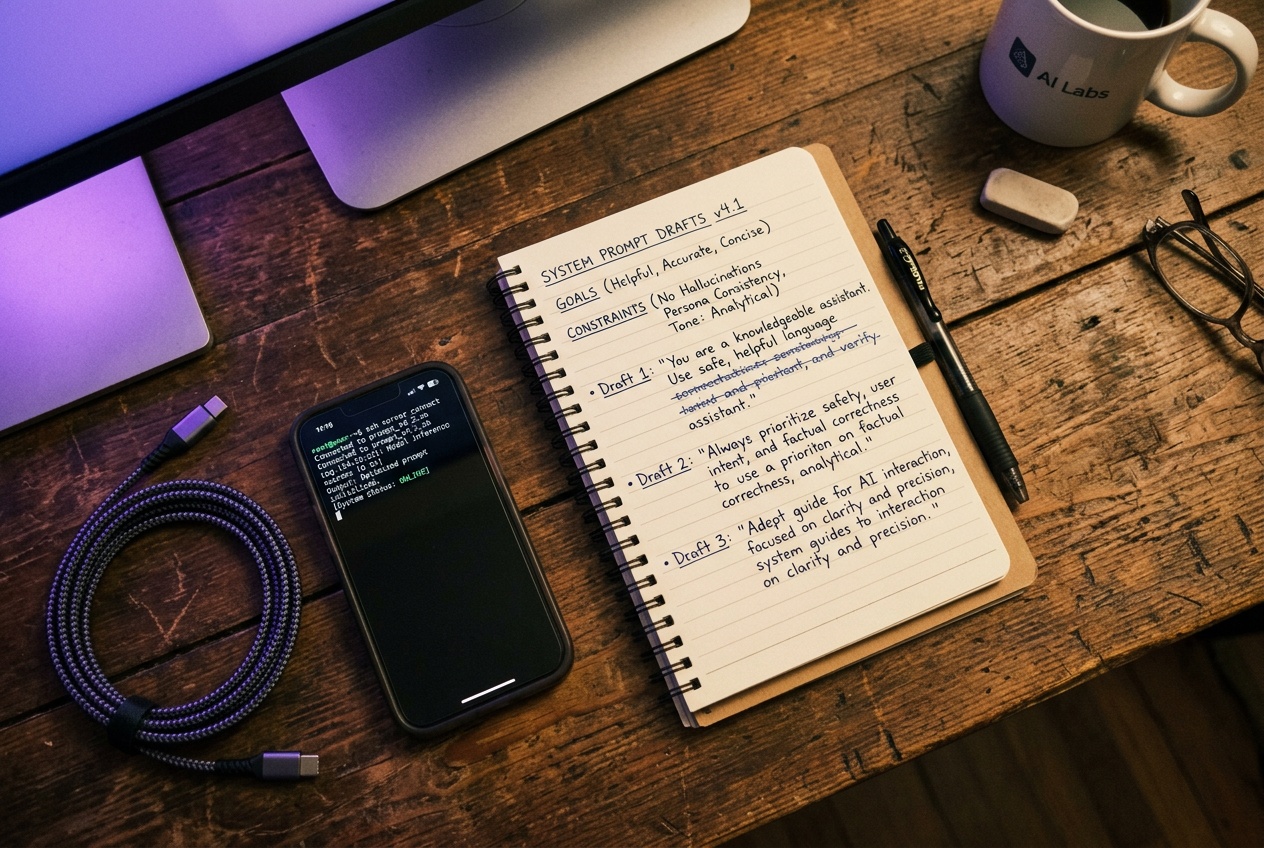

The architecture has three moving parts. First, an agent definition: you pick a model (Sonnet 4.6, Opus 4.6, or Haiku 4.5), write a system prompt, attach tools and MCP servers, and save it with an ID. Second, an environment configuration: a cloud container with Python, Node.js, and Go pre-installed, with network access rules you control. Third, session management: a persistent, append-only event log that tracks every tool call and output across a session that can run for hours without you babysitting it.
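To make the first two parts concrete, here is a minimal local model of an agent definition and an environment configuration as plain immutable records. It is a sketch under stated assumptions: the class names, field names, the `security-reviewer` ID, and the `sonnet-4.6` model string are hypothetical placeholders, not Anthropic's API schema. Only `agent_toolset_20260401` comes from the product itself.

```python
from dataclasses import dataclass

# Hypothetical local model of an agent definition and its environment.
# Class names, field names, and the model string are illustrative
# assumptions, not Anthropic's API schema.

@dataclass(frozen=True)
class AgentDefinition:
    agent_id: str                       # saved definitions are referenced by ID
    model: str                          # a Sonnet, Opus, or Haiku model name
    system_prompt: str
    tools: tuple[str, ...] = ()         # e.g. ("agent_toolset_20260401",)
    mcp_servers: tuple[str, ...] = ()   # e.g. Slack, GitHub, Google Drive connectors

@dataclass(frozen=True)
class EnvironmentConfig:
    runtimes: tuple[str, ...] = ("python", "node", "go")  # pre-installed in the container
    allowed_hosts: frozenset[str] = frozenset()           # network rules you control

    def may_connect(self, host: str) -> bool:
        # Deny by default: only hosts listed explicitly are reachable.
        return host in self.allowed_hosts

reviewer = AgentDefinition(
    agent_id="security-reviewer",       # hypothetical ID
    model="sonnet-4.6",                 # placeholder, not a real model identifier
    system_prompt="Scan the repository for security issues and report findings.",
    tools=("agent_toolset_20260401",),
    mcp_servers=("github",),
)
sandbox = EnvironmentConfig(allowed_hosts=frozenset({"api.github.com"}))
```

Freezing both records in this sketch makes a saved definition behave like a versioned artifact: changing the prompt means creating a new definition rather than mutating one that running sessions depend on.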

The key primitive is agent_toolset_20260401. Drop that into your tool list and you get bash execution, file operations, web search, and code execution inside an isolated Linux container. Add MCP servers for Slack, GitHub, or Google Drive and the agent can touch your actual systems, not just demo data.

What separates this from the Messages API with tool use is the orchestration layer. Anthropic's framing is direct: Managed Agents is a meta-harness with general interfaces that can accommodate many different harnesses, of which Claude Code is one example. The point is that you do not rebuild the agent loop every time the underlying model improves or context management strategies change. Anthropic owns that migration.

How This Compares to Building Your Own Agent Runtime

If you are already running the Messages API with tool use, you have probably written something that looks like an agent loop: call Claude, parse tool calls, execute them, feed results back, repeat until done. That works fine at small scale. It breaks in interesting ways when sessions run for hours, when the container crashes mid-task, or when you need to inspect what happened three hours ago on a job that is still running.
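A minimal sketch of that hand-rolled loop, assuming the Messages API tool-use shapes: the model returns content blocks, a `tool_use` block carries an `id`, `name`, and `input`, `stop_reason` is `"tool_use"` while the model wants tools, and results go back as `tool_result` blocks keyed by `tool_use_id`. `call_model` stands in for the real API call, and `scripted_model` is a hypothetical fake so the example runs offline.

```python
from typing import Any, Callable

Message = dict[str, Any]
ModelFn = Callable[[list[Message]], dict[str, Any]]  # stand-in for the API call
ToolFn = Callable[[dict[str, Any]], str]

def run_agent_loop(call_model: ModelFn, tools: dict[str, ToolFn],
                   task: str, max_turns: int = 10) -> str:
    # All state lives in this in-memory list; a crash loses the whole run.
    messages: list[Message] = [{"role": "user", "content": task}]
    for _ in range(max_turns):
        response = call_model(messages)
        messages.append({"role": "assistant", "content": response["content"]})
        if response["stop_reason"] != "tool_use":
            # Model is done: return its text blocks.
            return "".join(b["text"] for b in response["content"] if b["type"] == "text")
        results = []
        for block in response["content"]:
            if block["type"] == "tool_use":
                tool = tools.get(block["name"])
                output = tool(block["input"]) if tool else f"unknown tool: {block['name']}"
                results.append({"type": "tool_result",
                                "tool_use_id": block["id"], "content": output})
        # Tool results go back to the model as the next user turn.
        messages.append({"role": "user", "content": results})
    raise RuntimeError(f"agent did not finish within {max_turns} turns")

def scripted_model(messages: list[Message]) -> dict[str, Any]:
    # Hypothetical fake model: first turn requests a tool, second turn answers.
    if len(messages) == 1:
        return {"stop_reason": "tool_use", "content": [
            {"type": "tool_use", "id": "call_1", "name": "add", "input": {"a": 2, "b": 3}}]}
    tool_output = messages[-1]["content"][0]["content"]
    return {"stop_reason": "end_turn",
            "content": [{"type": "text", "text": f"sum is {tool_output}"}]}

print(run_agent_loop(scripted_model, {"add": lambda args: str(args["a"] + args["b"])}, "add 2 and 3"))
# prints: sum is 5
```

Every piece of session state here sits in the local `messages` list. If the process dies three hours in, the run is gone and there is nothing to inspect from outside, which is the failure mode described above.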

Managed Agents handles all of that. Sessions persist through disconnections. The event log is immutable and queryable. You can reconnect to a running session, check its state, and inject new instructions without restarting the whole thing.
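To make the session model concrete, here is a hypothetical in-memory event log with the properties described: events are immutable and only appended, a reconnecting client asks for everything after the last sequence number it saw, current state is derived by replaying the log, and a new instruction is just another appended event. The `EventLog` class, the event kinds, and the cursor scheme are illustrative assumptions, not Anthropic's session API.

```python
from dataclasses import dataclass, field
from typing import Any

@dataclass(frozen=True)
class Event:
    seq: int                 # position in the log, assigned on append
    kind: str                # e.g. "status", "tool_call", "tool_output", "user_message"
    data: dict[str, Any]

@dataclass
class EventLog:
    """Append-only by construction: the only mutation is append()."""
    _events: list[Event] = field(default_factory=list)

    def append(self, kind: str, **data: Any) -> Event:
        event = Event(len(self._events), kind, dict(data))
        self._events.append(event)
        return event

    def since(self, cursor: int) -> list[Event]:
        # A reconnecting client passes the last seq it saw (-1 for everything).
        return self._events[cursor + 1:]

    def query(self, kind: str) -> list[Event]:
        return [e for e in self._events if e.kind == kind]

    def current_status(self) -> str:
        # State is derived by replaying the log, not stored separately.
        status = "idle"
        for e in self._events:
            if e.kind == "status":
                status = e.data["value"]
        return status

log = EventLog()
log.append("status", value="running")
log.append("tool_call", name="bash", command="pytest")
last_seen = log.append("tool_output", text="3 passed").seq
# The client disconnects here; the session keeps working.
log.append("tool_call", name="bash", command="git diff")
# On reconnect: fetch only what was missed, then inject a new instruction.
missed = log.since(last_seen)
log.append("user_message", text="Also update the changelog.")
```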

The Agent SDK and Messages API are still the right choice when you need fine-grained control over the agent loop, when you are running on your own infrastructure for compliance reasons, or when you are building something that does not fit Anthropic's general-purpose container model. Managed Agents trades control for operational simplicity. That is a reasonable trade for most business applications. It is the wrong trade if your security team needs to audit every layer of the stack or if you are building something with unusual resource requirements.

From where I sit building automations for small businesses and e-commerce operators, the honest comparison is this: a custom n8n workflow with a Claude node and a Supabase backend gives you more flexibility and costs less per run at low volume. Managed Agents makes sense when the task complexity is high enough that the agent needs to make dozens of decisions, run arbitrary code, and recover from failures without human intervention. For a workflow that runs the same five steps every morning, it is overkill.

The Lock-In Question and What It Means in Practice

The risk here is real and worth naming clearly. When Anthropic owns your agent loop, your environment, and your session state, switching costs are high. If pricing changes, if the beta API breaks, or if Anthropic deprecates the managed-agents-2026-04-01 header in eighteen months, you are doing a rewrite, not a config change.

That is not a reason to avoid it. It is a reason to go in with clear eyes. Most teams that build their own agent runtime end up with something that is harder to maintain and less reliable than a managed service, because agent infrastructure is genuinely hard and it is not your core product. The same calculus applies to running your own Postgres versus using a hosted database. You give up control to get reliability and speed.

The beta status is the real constraint right now. The managed-agents-2026-04-01 header requirement signals that the API surface is not stable. Anything you build today should be behind an abstraction layer thin enough to swap out if the interface changes. Do not let the agent definition IDs or session management patterns leak into your core business logic.
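One way to keep that layer thin, sketched under assumptions: business logic depends only on a small `AgentRunner` protocol, and a single adapter knows the agent ID and the `managed-agents-2026-04-01` pin. The `anthropic-beta` header name, the request body fields, and the injected transport are assumptions for illustration, not the documented Managed Agents API. `InProcessRunner` is a hypothetical stand-in for a self-hosted loop, for example one built on the Messages API.

```python
from typing import Any, Callable, Protocol

class AgentRunner(Protocol):
    """The only agent surface business logic may depend on."""
    def run(self, task: str) -> str: ...

# Transport: (headers, body) -> result text. Injected so the adapter can be
# tested offline; the real HTTP call is deliberately not shown.
Transport = Callable[[dict[str, str], dict[str, Any]], str]

class ManagedAgentsRunner:
    """Hypothetical adapter: the one module that knows Managed Agents details."""
    BETA = "managed-agents-2026-04-01"  # the version pin lives here and nowhere else

    def __init__(self, agent_id: str, transport: Transport) -> None:
        self._agent_id = agent_id
        self._transport = transport

    def run(self, task: str) -> str:
        # Header name and body fields are assumptions, not the documented API.
        headers = {"anthropic-beta": self.BETA}
        body = {"agent_id": self._agent_id, "input": task}
        return self._transport(headers, body)

class InProcessRunner:
    """Fallback: wraps a self-hosted agent loop behind the same interface."""
    def __init__(self, loop: Callable[[str], str]) -> None:
        self._loop = loop

    def run(self, task: str) -> str:
        return self._loop(task)

def summarize_invoice(runner: AgentRunner, invoice_text: str) -> str:
    # Business logic: no agent IDs, session IDs, or beta headers in sight.
    return runner.run(f"Extract supplier, total, and due date:\n{invoice_text}")
```

If the beta interface changes, the rewrite in this sketch is confined to `ManagedAgentsRunner`; moving to the self-hosted path is a constructor change at the call site, not an edit to `summarize_invoice`.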

For business owners evaluating this: the pitch is that you can deploy a reliable autonomous agent, one that scans your codebase for security issues, processes supplier invoices, or monitors your ad campaigns, without hiring someone to build and maintain the infrastructure underneath it. That pitch is credible. The execution risk is that you are betting on a beta product from a company that is moving fast enough that two-year-old documentation is already outdated.

The three-to-six-month gap between demo and production is real. Whether a managed service is the right way to close it depends on what you are willing to own and what you are willing to trust someone else with.