AI Jargon, Translated for People Who Run Businesses

TechCrunch published an AI glossary for general readers. Here's what those terms actually mean when you're deciding whether to build something with them.

TechCrunch just published a glossary of common AI terms. It's accurate, it's written for a general audience, and it will not help you make a single business decision.

That's not a dig. The piece does what it's supposed to do. But there's a gap between knowing what a large language model is and knowing whether to put one in your order processing pipeline. That gap is where most small business owners get stuck.

What the Technical Definitions Get Right (and Miss)

The TechCrunch glossary covers the usual suspects: LLMs, hallucinations, AGI, chain-of-thought reasoning, AI agents. The definitions are solid. Chain-of-thought is well explained. The AGI section is honest about how contested the term is.

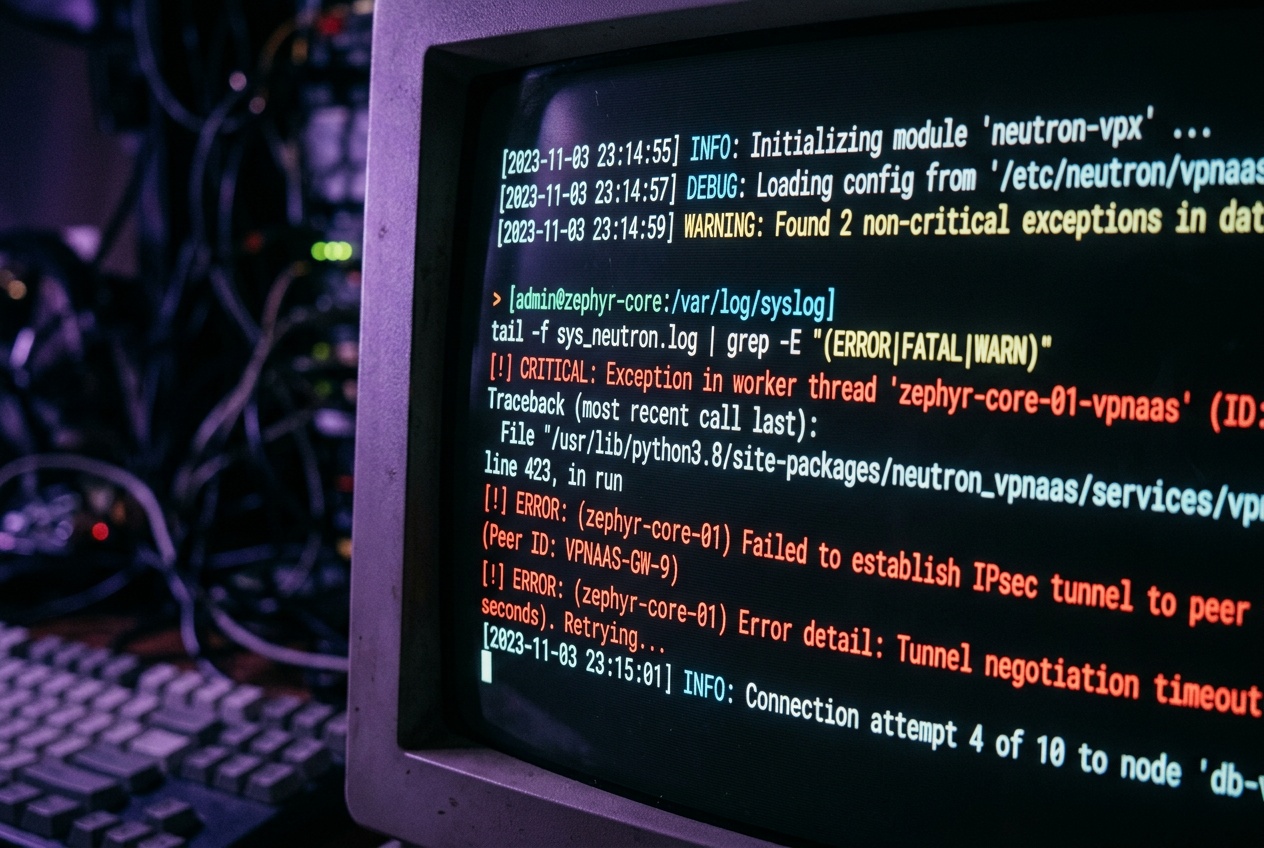

But glossaries describe categories. They don't describe consequences. Knowing that an AI agent is "an autonomous system that may draw on multiple AI systems to carry out multistep tasks" tells you nothing about what happens when it fails at step three of seven and no one notices for a week.

The term that matters most for anyone building with these tools right now is hallucination. The glossary will define it as the model generating confident, plausible-sounding output that is factually wrong. What the glossary won't tell you is that hallucinations are not bugs you can patch. They are a property of how these systems work. You don't fix hallucinations. You build around them.

How These Terms Map to Real Automation Decisions

Here's how I think about the key terms when I'm scoping a project for a client.

LLM is a text-in, text-out system. It's good at transforming language: summarizing a customer review, classifying a support ticket, drafting a reply in a specific tone. It is not a database. It does not remember things between calls unless you build memory into the system yourself. Every time you call it, it starts fresh unless you explicitly pass context.

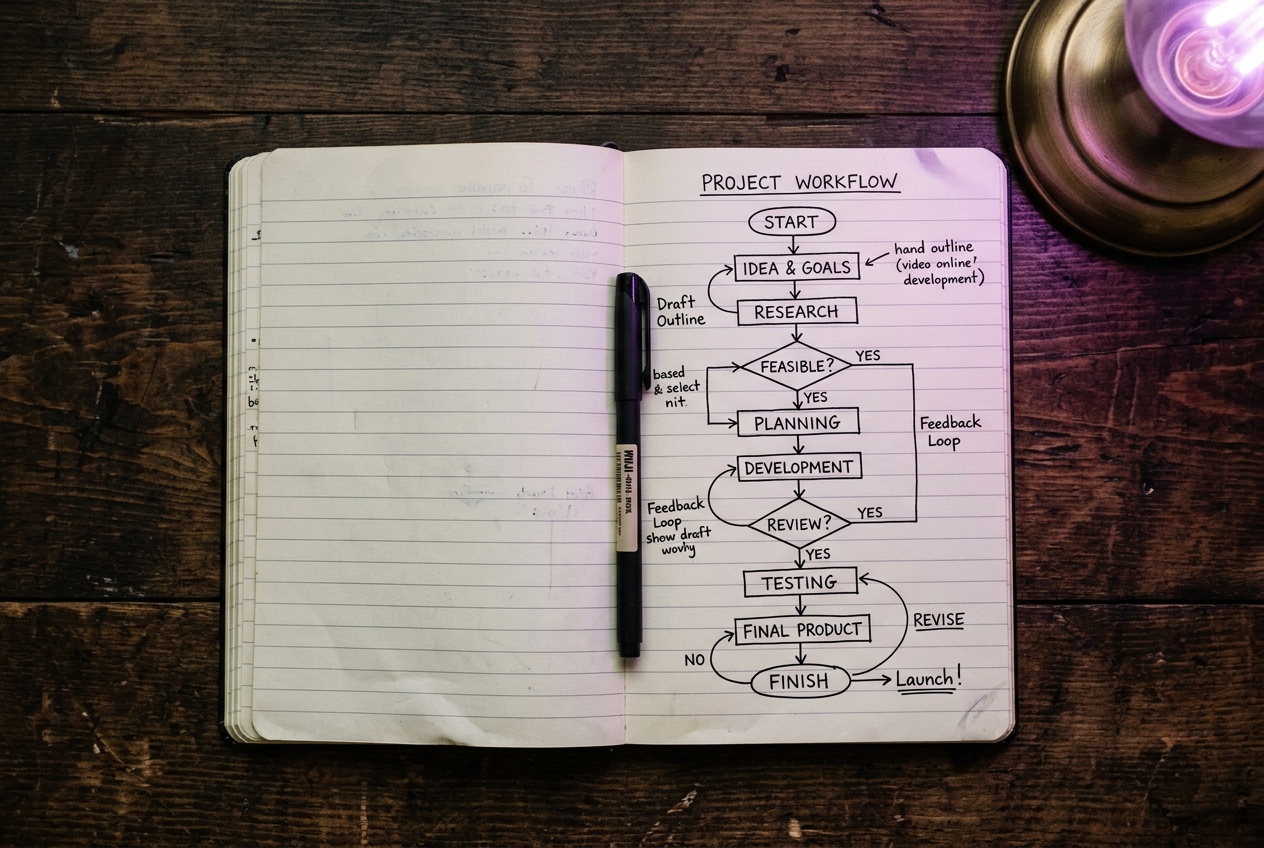
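The "starts fresh" point is easier to see in code. The sketch below is illustrative only: `fake_model` is a hypothetical stand-in for a real LLM API, and `Conversation` is an assumed helper name, not part of any library. It shows the memory living on your side and being resent with every call.

```python
from typing import Callable

Message = dict  # assumed shape: {"role": "...", "content": "..."}

def fake_model(messages: list) -> str:
    """Hypothetical stand-in for an LLM call. It can only 'see' what is in `messages`."""
    seen = " ".join(m["content"] for m in messages)
    if "order #1042" in seen:
        return "Order #1042 is the one you mentioned."
    return "I don't have an order number."

class Conversation:
    """Keeps the history on our side and passes all of it on every call."""

    def __init__(self, model: Callable[[list], str]) -> None:
        self.model = model
        self.history: list = []

    def ask(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = self.model(self.history)  # the full history goes in each time
        self.history.append({"role": "assistant", "content": reply})
        return reply

# Two independent calls: the second one has no idea what the first one said.
fake_model([{"role": "user", "content": "My order is order #1042."}])
no_memory = fake_model([{"role": "user", "content": "Which order did I mention?"}])

# Same two questions, but the history is carried forward explicitly.
chat = Conversation(fake_model)
chat.ask("My order is order #1042.")
with_memory = chat.ask("Which order did I mention?")
```

In a real build the history usually lives in a database or in the workflow's own state, but the principle is the same: if the context isn't in the request, the model doesn't have it.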

AI agent is a workflow where an LLM is given tools and allowed to decide which ones to use. In practice, for a small business, this usually means something like: read the incoming order email, check the inventory spreadsheet, and draft a fulfillment confirmation. The "agent" part just means the model, not a human and not a fixed wiring diagram, is choosing the sequence. The catch is that agents can go sideways in ways that a deterministic workflow cannot. A regular n8n workflow does exactly what you wired. An agent interprets. Interpretation introduces variance.
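Here is a rough sketch of that difference, under heavy assumptions. The three tool functions, the SKU, and the `choose` callback (standing in for the model's decision) are all made up for illustration. The fixed workflow runs the steps in the order you wrote them. The agent loop lets something else pick the next step, so it gets a whitelist of tools, a step limit, and a log.

```python
from typing import Callable

# Hypothetical tools for the order example. Real ones would hit email and a spreadsheet.
def read_order(state: dict) -> dict:
    return {**state, "sku": "MUG-01", "qty": 2}

def check_inventory(state: dict) -> dict:
    stock = {"MUG-01": 5}
    return {**state, "in_stock": stock.get(state["sku"], 0) >= state["qty"]}

def draft_confirmation(state: dict) -> dict:
    return {**state, "draft": f"Confirmed: {state['qty']} x {state['sku']}"}

TOOLS = {
    "read_order": read_order,
    "check_inventory": check_inventory,
    "draft_confirmation": draft_confirmation,
}

def run_fixed(state: dict) -> dict:
    """Deterministic workflow: the sequence is wired in advance."""
    for step in (read_order, check_inventory, draft_confirmation):
        state = step(state)
    return state

def run_agent(choose: Callable[[dict, list], str], state: dict, max_steps: int = 5):
    """Agent loop: `choose` (the model, in real life) picks the next tool by name."""
    log = []
    for _ in range(max_steps):
        name = choose(state, log)
        if name == "done":
            return state, log
        if name not in TOOLS:
            raise ValueError(f"agent chose unknown tool {name!r} after {log}")
        state = TOOLS[name](state)
        log.append(name)  # record every step so failures are visible
    raise RuntimeError(f"agent did not finish within {max_steps} steps: {log}")

def scripted_chooser(state: dict, log: list) -> str:
    """Stand-in for a well-behaved model that follows the expected plan."""
    plan = ["read_order", "check_inventory", "draft_confirmation", "done"]
    return plan[len(log)]

def stuck_chooser(state: dict, log: list) -> str:
    """Stand-in for a model that keeps repeating the first step."""
    return "read_order"

fixed_result = run_fixed({})
agent_result, agent_log = run_agent(scripted_chooser, {})
```

The step limit and the log are the parts that matter. With `stuck_chooser`, `run_agent` raises an error that names the steps taken instead of looping quietly.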

Hallucination is the reason I don't let LLMs write anything that goes out to customers without a review step, unless the output is tightly constrained. If I'm generating a product description from structured data, the model has very little room to invent something. If I'm asking it to summarize a free-form conversation, it has a lot of room. The more open the prompt, the more you need a human checkpoint or a validation layer before the output goes anywhere consequential.
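A validation layer doesn't have to be sophisticated. As one narrow example, assuming the description was generated from a structured product record, you can check that every number in the text also appears in the source data and send anything else to a person. The function names, the product record, and the "publish"/"review" labels below are hypothetical, and this check only catches invented numbers, not invented claims in general.

```python
import re

NUMBER = r"\d+(?:\.\d+)?"

def unsupported_numbers(text: str, source: dict) -> set:
    """Return numbers that appear in the generated text but nowhere in the source data."""
    allowed = set()
    for value in source.values():
        allowed.update(re.findall(NUMBER, str(value)))
    found = set(re.findall(NUMBER, text))
    return found - allowed

def route(text: str, source: dict) -> str:
    """Send the draft to a human if it contains a number we can't trace to the source."""
    return "review" if unsupported_numbers(text, source) else "publish"

# Hypothetical structured record the description was generated from.
product = {"name": "Ceramic Mug", "capacity_ml": 350, "price_usd": "14.50"}

grounded = "The Ceramic Mug holds 350 ml and costs $14.50."
invented = "The Ceramic Mug holds 500 ml and is dishwasher safe to 90 degrees."

grounded_route = route(grounded, product)
invented_route = route(invented, product)
invented_values = unsupported_numbers(invented, product)
```

The tighter the input, the more of these mechanical checks you can write. For a free-form summary there is usually nothing comparable to check against, which is why that case needs a human.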

Chain-of-thought matters when you're asking the model to do something with logical steps: categorize this complaint and suggest which department should handle it, or extract these five fields from this invoice and flag anything missing. Turning on chain-of-thought (or using a reasoning model) slows things down and costs more tokens, but in my experience it tends to make the output more reliable for anything that has right and wrong answers. For pure text generation, it usually doesn't help much.
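One common way to use chain-of-thought in a workflow is to ask for the reasoning first and a machine-readable answer on the last line, then parse only that line. A minimal sketch, assuming a made-up department list and a `DEPARTMENT:` answer format that I picked for illustration:

```python
from typing import Optional

DEPARTMENTS = {"billing", "shipping", "returns"}  # hypothetical list

def build_prompt(complaint: str) -> str:
    """Ask for step-by-step reasoning, then one strictly formatted final line."""
    return (
        "Read the customer complaint below.\n"
        "First, reason step by step about what the customer is asking for.\n"
        "Then finish with one line in the form 'DEPARTMENT: <name>', "
        f"choosing from: {', '.join(sorted(DEPARTMENTS))}.\n\n"
        f"Complaint: {complaint}"
    )

def parse_department(reply: str) -> Optional[str]:
    """Read the last 'DEPARTMENT:' line. Return None if it is missing or not on the list."""
    for line in reversed(reply.strip().splitlines()):
        if line.strip().upper().startswith("DEPARTMENT:"):
            name = line.split(":", 1)[1].strip().lower()
            return name if name in DEPARTMENTS else None
    return None

# Hand-written example replies, not real model output.
good_reply = (
    "The customer was charged twice, so this is about payment.\n"
    "DEPARTMENT: Billing"
)
off_list_reply = "This sounds like a contract dispute.\nDEPARTMENT: legal"

prompt = build_prompt("I was charged twice for one order.")
good = parse_department(good_reply)
off_list = parse_department(off_list_reply)
missing = parse_department("I think someone should look at this.")
```

A `None` result is the signal to route the ticket to a person rather than guess.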

AGI is not relevant to your business right now. It's a useful concept to understand the direction the field is going, but nobody is building production workflows around it. Ignore any vendor who leads with it.

What This Means If You're Thinking About Using Any of It

The practical question is never "do I understand what an LLM is." It's "where in my current process would replacing a human decision with a language model call introduce acceptable risk, and where would it introduce unacceptable risk."

Classifying inbound emails by type: low risk, high value. Automatically responding to a customer complaint with no human review: high risk, probably not worth it yet. Extracting structured data from messy supplier invoices: medium risk, very manageable if you build a validation step. Generating first drafts of product descriptions for someone to review before publishing: low risk, good return on investment.
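For the invoice case, the validation step can be a few lines that run before the extracted data touches anything else. This is a sketch under assumptions: the five field names are placeholders, and the model is assumed to return its extraction as a JSON string.

```python
import json

# Hypothetical required fields. Use whatever your bookkeeping actually needs.
REQUIRED_FIELDS = ("invoice_number", "supplier", "date", "total", "currency")

def check_extraction(raw: str):
    """Parse the model's output and list every problem found. An empty list means it passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return {}, ["not valid JSON"]
    if not isinstance(data, dict):
        return {}, ["expected a JSON object"]

    # Flag fields that are absent, null, or empty strings.
    problems = [f"missing: {f}" for f in REQUIRED_FIELDS if data.get(f) in (None, "")]

    # A total that came back as text ("about 120") is a sign the extraction went wrong.
    total = data.get("total")
    if total not in (None, "") and not isinstance(total, (int, float)):
        problems.append("total is not a number")
    return data, problems

# Hand-written example outputs, not real model output.
incomplete = '{"invoice_number": "INV-77", "supplier": "Acme", "date": "2024-03-01", "total": 120.5}'
complete = (
    '{"invoice_number": "INV-78", "supplier": "Acme", "date": "2024-03-02", '
    '"total": 80, "currency": "USD"}'
)

_, incomplete_problems = check_extraction(incomplete)
_, complete_problems = check_extraction(complete)
_, garbage_problems = check_extraction("Sorry, I could not read this invoice.")
```

Anything with a non-empty problem list goes to a review queue instead of into the books. That one branch is most of what moves this task from "risky" to "manageable."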

The glossary gives you vocabulary. Vocabulary is the start. What you actually need to know is where the failure modes live, because that's what determines whether a given integration makes your operation faster or just introduces a new category of error to clean up.

Most of the AI hype is about capability. The useful questions are about failure.